Link back to Home page

Link to typed notes

1. Into to Stats

Mean

Median: the middle value in an ordered list of observations

Model the most frequent value in the data

SSE = sum of squares of errors

SAE = sum of absolute errors

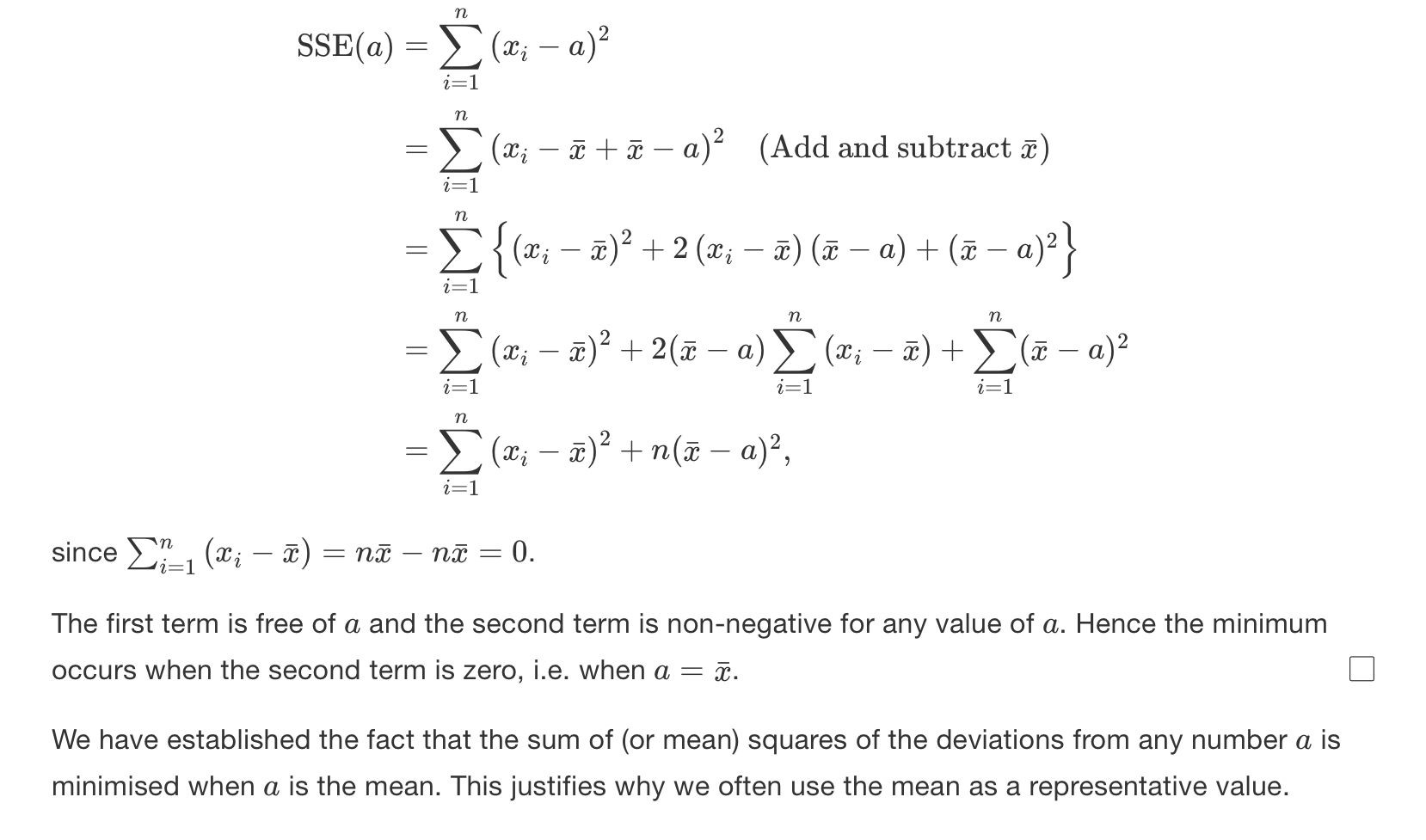

Theorem:

Theorem: median minimizes the SAE

The main idea is to look at slopes.. and then just find the value where the gradient changes from negative to positive

Proof is long and convoluted, link to it here, at some point it will make sense, until then, link here

Measures of spread

IQR = Interquartile range, difference between

The variance is:

Notice how the

And we found SSE is minimized at

So

To calculate the mean, we just divide by the the total population:

There is a reason we do

Proof of variance (not examined by still good for understanding)

However, there is a more useful form of the variance, the proof of which is given below:

Then we split the sum:

As the

Now using the definition of the mean:

So to rearrange

Therefore:

The standard deviation is the square root of variance

2. Intro to Probability

Definitions

Definition Random experiment: one in which we do not know exactly what the outcome of the experiment will give.

Definition Sample space - the set of all possible outcomes. Denoted by capital

Definition Event - particular result of the random experiment, a subset of the sample space. Denoted by capital letters

Everything that is random is denoted by a capital letters. Lowercase is where we have observed

Definition Union -

Definition Intersect -

Definition Mutually exclusive -

Definition Complement -

The statement above basically means that everything in A and not A is the sample space

Axioms of probability

Combinatorics

The entire point of this section is fairly simple: being able to count outcomes without listing them all

There are two main questions that will determine which formula we have to use.

- Does the order matter? If it does, then we use permutations, otherwise combinations

- Do we replace items after choosing? If yes, with we use the with replacement cases

Permutations

The scenario here is that we have

Think of it like filling

- The first sport can take

choices - The second spot can take

choices since the first one is already used - The third can take

choices and so on...

So the total number of ordered arrangements (aka permutations) is:

Multiply numerator and denominator by

Combinations

Now suppose we want to choose

In other ways, when we go from permutations to combinations, we don't care what order the items are chose in, only which items were chosen. So as we've already counted the same group multiple times for every possible order with permutations, we need to divide by how many times we've 'overcounted', which is

Conditional probability and Bayes’ Theorem

At its simplest, probability is just chance of an event, so it's:

Conditional probability is the probability event

The formal definition is given below:

When we arrange, we can get:

Bayes’ Theorem

Now, when we know something about one conditional event, but want to know about the reverse, we can use Bayes' Theorem:

We can derive the numerator by just substituting

Bayes' Theorem is used widely when in medical test scenarios as it allows people to update their belief about an event after new evidence appears. To think about using an analogy:

- We start with a prior belief

- Get new evidence

- And then we update our belief to

Independence

Intuitively, events

Knowing that one event happened gives you no new information about the other.

Notice how this is different to mutually exclusive, which means that both events cannot happen at the same time, so

Formally,

If

Then

Proof of Independence of complementary events

If

We want to show the following below:

And how using using the general addition rule, we can rewrite the above as

Given that

This can be factorise as:

Which simplifies to

3. Probability Distributions

Random variables are denoted by uppercase letters, like

A random variable is just a mapping from the outcomes space to the real line, so the combined probability of all values of a random variable is 1

Discrete Random Variable (DRV)

Definition

DRV - discrete random variable. If a random variable has a finite value

A DRV takes specific values, like heads in coin flips and we can count how many outcomes there are. We assign probabilities to each value individually:

And so to find the total probability up to some value

For a DRV, we define a function $$ f(x) = P(X=x) $$

The function

Continuous Random Variable (CRV)

Definition

CRV - Continous random variable. When a variable can take any value on the real line

There are infinitely many possible values, so the probability of hitting any exact number,

So instead, we can talk about densities, the probability per unit of

Probability Density Function (PDF)

This function essentially tells us how densely probability is packed around each value.

This is defined as:

That's why for CRVs, integration replaces summation, it's the continuous version of adding up infinitely small pieces.

For the pdf, we need:

Cumulative distribution function (CDF)

This function calculates the probability of the random variables up to its arguments:

For a DRV, it's defined as:

And for a CRV, the CDF is defined as:

So the CDF is essentially the area under the PDF curve up to

Relationship between PDF and CDF

For a CRV, the PDF is the derivative of the CDF

If

And we differentiate both sides with respect to

What this tells us that the PDF is just the slope of the CDF. Where the CDF rises, the PDF is large, lots of probability density there. And where the CDF is flat, the PDF is small.

Expectation

Think of expectation (or the expected value) as the long-run average value of a random variable if you repeated the experiment infinitely many times.

For example, if you roll a fair rice, the values are in the set of

Each roll has probability

Then

You'll never roll a

Formal definition

For a DRV with possible values

And for CRV with PDF

Same idea, we're just replacing the sum with an integral because now the variable takes infinitely many values

Expectation of a uniform distribution function

Say we have

The PDF is:

Then the expectation is:

Which is just the midpoint of the interval

Expectation of a function

If you have some function of the random variable say

- Discrete:

- Continuous:

This is extremely useful as it lets us find things like:

which is later needed for variance - etc.

Linearity of expectation

this is probably one of the most useful properties in probability.

For any random variable

The reason we can do this is because expectation acts like a weighted average. If we stretch all our data by a factor of

Proof of linearity

For a DRV:

But since

And now we can distribute the sum:

The first part of the sum is just

So we have:

For a CRV:

And again, as

The first part is again just

Note on notation here: capital

Expectation of symmetric random variable

A random variable

If

Intuitively, think of this as the centre of mass of the function. If the PDF or PMF is symmetric about a point

Proof for symmetric random variable

First, let

Then we have:

We can substitute

As as

Variance

We already saw that the expectation

If we wanted to measure how far (deviated) each possible value of

For very large

So when

This variance is therefore defined by:

Proving variance

There is a more useful form of variance, which we can prove from the definition below:

After expanding the bracket

Take the expectation of both sides:

Using the linearity of expectation, we can split and pull constants out to get:

After simplifying we have:

Linearity of variance

If

- Scaling by

stretches or shrinks the spread - Adding

shifts everything, so no change in spread

To prove this, we can use the definition of variance and some algebra

After substituting in for

We can do some cancelling of the

As

Sample median of random variables

Quantiles

For any

Standard Discrete Distributions

Bernoulli Distributions

Bernoulli trails are a name for a set of independent trials, where each trial has only two possible outcomes- success, and failure.

For a Bernoulli trial:

The Bernoulli distributions has pmf:

For this distribution, the expectation is:

And the variance is:

Binomial distribution

Defined as:

If

PMF for the Binomial

To find the PMF, we want to find

The probability of the outcomes can be given by:

So to generalize,

The pmf is:

Now one might wonder how we know that $$ \sum_{x=0}^{n}f(x) = 1$$

… by using the binomial theorem

Now if we choose

Expectation and Variance of a Binomial function

Intuitively, think of

Let

Where

Now, we know that for a single trial, the expectation of a Bernoulli variable is

And since each expectation is linear, the expectation of the sum is

Where each

So we get that expectation of a binomial is

The variance is

The proof of this is left as an exercise to the reader

Geometric Distribution

Intuitively, the geometric distribution is the first one that captures the "keep trying until you succeed" idea. So imagine we're running an experiment where:

- Each trial is independent

- Each trial has a probability of success

- We keep repeating until we get our first success

The random variable

PMF for a geometric distribution

To get a success on the

CDF

Note - R assumes the number of failures until first success, instead of the number of trials until the first success. So if

Memoryless distribution

The geometric distribution is memoryless, i.e

This means just that the probability we still have to wait for

Expectation of a geometric distribution

For the intuition, imagine playing a game where:

- Every trial has a chance

of success

How many tries do we expect to need before success?

If

If

So the average should:

- Decrease when

increases - Increase when

decreases

The proof involves using negative binomial series, something which will not be covered in lectures, but it’s in the notes. A much nicer proof is covered in 2nd year Statistical Inference module

Variance of a geometric distribution

Going back our previous analogy, the variance here is how spread out the time between our successes. So if

Hypergeometric Distribution

This distribution is all about sampling without replacement, i.e. the realistic binomial

Suppose we have a population of

- A proportion

are of type (success) - The remaining

are of type (failures)

So the total number of type

Now, we want to sample

Then it's PMF is given by:

The numerator counts how many ways to get exactly

Expectation

Notice how the expectation is the same as the binomial distribution, and that's because we are drawing without replacement

Variance

Negative Binomial Distribution

The negative binomial distribution models the number of trials needed to achieve a fixed number of successes,

If we just wanted

PMF

Imagine flipping a coin where

Expectation

Each success takes

Variance

Poisson Distribution

The Poisson distribution models the number of times an event happens in a fixed interval. It includes things like the number of emails you might get per hour, we can't predict exactly when then event happens, but over many intervals, the average rate stays the same.

So formally, It's the limit as

is large is small and is moderate

PMF

The PMF of this function can be derived by taking the limit of the binomial where we want to rearrange this and substitute for

Variance

If events occur randomly and independently, then the variability of counts around the means grows proportionally to the mean. So, the mean = variance in this case

Therefore, the expectation and variance are the same for the Poisson distribution

Uniform Distribution

PDF - as this is a continuous distribution

Expectation

Variance

Exponential Distribution

This distribution is bit like a continuous version of the geometric distribution, and it connects really nicely with the Poisson process. The exponential models the waiting time until the next event in a Poisson process. So if:

- events occur randomly and independently in time

- At a constant average rate

(events per unit time)

Then the waiting timebetween two successive events is:

So the Poisson counts how many events occur in a fixed time interval, whereas the exponential measures how long between events

The full proof involves the gamma function, which is given is the official lecture notes

Expectation

If events occur at

Variance

This one isn't immediately obvious, but the spread of waiting time grows as

CDF

The cdf for

Quantiles

As talked about earlier, quantiles tells us how far along the probability distribution we have to go to capture a certain proportion of data. So for a CRV

So we're solving for the

For the exponential distribution, recall that the cdf is

And that's our quantile function

Memorylessness (Again!)

The exponential distribution is also memoryless, as it’s just a continuous version of the geometric.

So the probability that we want to wait at least

Normal Distribution

The CDF is just

3b1b has some good videos on this

To show that sum of all probabilities is

And the standard normal

Expectation

Variance

Linear Transformation

We can also standardise any normal to get

Probabilities using tables

Given the standard normal distribution with

So if we want probability for

And then we ca use the normal CDF

Log-Normal Distributions

If

Expectation

Variance

Joint Distributions

Up to now, we've mostly dealt with one random variable at a time, eg:

And we want to know how these two relate, and that's essentially what joint distributions are about

So when we have two random variables,

Discrete Case

IF

This would like a table of probabilities, one for each combination of

- non negative

- sum to

across all

Continuous case

If

The method above uses bivariate integration, which is just the same as finding the volume under a curve if we think about integration as finding the are under a curve.

Marginal Distributions

Each random variable has its own individual distribution, even when considered together. And these are called marginal distributions. This is because we get them by summing or integrating the joint distributions along the margins.

Discrete

This basically means to get the probability of

Continuous

Expectation of

Now consider we want to find the average (expected) value of something that depends on both

This is the same as the single-variable version, but now in two dimension

Covariance

Covariance tells us how two random variables move together

The intuition behind this is essentially:

- If

and increase together - then covariance is positive - If one goes when the other goes down, it's negative

- If they move independently, then covariance is around

Correlation - normalised covariance

Correlation then, is just the scaled version of covariance so its unit free and lies between

- If the correlation is

, then there is a positive linear relationship - If it's

, then it's a negative linear relationship - If its

, then there is no linear relationship

I can’t be bothered enough to do a proof for this, so yeah.

So correlation just shows how strong and in which direction

In function terms two random variable are independent if knowing one tells us nothing about the other:

So if

Sums of Random Variables

Independent Binomial Random Variables

If

The proof is in notes, but otherwise this makes intuitive sense.

IF

Then:

This is actually a pretty neat way to derive the expectation and variance for the Binomial and the negative binomial distribution: